The greatest obstacle to the use of web services is the problem of testing them. This paper addresses that problem. The solution lies in the ability to simulate the use of the services. Requests must be generated and responses must be validated automatically, quickly and reliably. To achieve this, the authors have developed a tool, WSDLTest, which uses a test data description language based on pre- and post-condition assertions both for generating WSDL requests and for validating WSDL responses. WSDLTest is part of a larger, complex tool set, DataTest, for creating and processing system test data. The architecture and functionality of the tool, as well as the experience gained in using it, are presented here.

The Emergence of Web Services

Web services are becoming increasingly important to the IT business, especially since the advent of service-oriented architecture. IT users are looking for ways to increase the flexibility of their IT systems so that they can react quickly to changes in their business environment. If a competitor comes up with a new marketing approach, they must be able to follow it within a short time. The adaptability of IT systems has become vital to a company's survival. When new laws are enacted, such as the Sarbanes-Oxley Act or Basel II, companies must be able to implement them within months. Changes to laws and regulations cannot be postponed. They must be implemented by a fixed deadline, which is often only a short time away.

Under this time pressure it is no longer possible to plan and organize long-running projects. A working solution has to be designed and assembled within a limited time. This demand for immediate response presupposes the existence of reusable components that can be glued together within a standard framework to support a customized business process. That standard framework is very often a service-oriented architecture such as those offered by IBM, Oracle and SAP [1]. The components are the web services; the overlying business process can be defined with the Business Process Execution Language, BPEL [2]. The glue for binding the business process to the web services, and for linking the web services to one another, is the Web Services Description Language, WSDL [3]. The web service components themselves come from various sources. Some are bought, some are taken from the open source community, some are newly developed, and others are taken from existing software systems, i.e. they are recycled for reuse in the new environment. Normally this means that they are wrapped [4].

The Need to Test Web Services

Regardless of where they come from, no one can guarantee that the web service components will work as expected. Even those that are bought may not fit the task at hand exactly. Such incompatibilities can lead to serious interaction errors. The recycled components may be even worse. Legacy programs tend to contain many hidden defects that compensate for one another in a given context. When they are moved to a different environment to perform a slightly different function, however, the defects suddenly surface. The same can happen with open source components. Perry and Kaiser have shown that the correctness of a component in one environment does not carry over to another environment. Components must therefore be retested for every environment in which they are reused [5].

The specific problems associated with testing web applications have been addressed by Nguyen. The complexity of the architecture, with interaction between different distributed components (web browsers, web servers, data servers, middleware, application servers and so on), introduces many new sources of potential errors. The many possible interactions between components, combined with the many different parameters, also increase the need for more test cases, which in turn drives up the cost of testing. The only way to cope with these increased costs is test automation [6].

For self-developed services, the reliability problem is the same as for any new software. They must undergo extensive testing at every level: the unit level, the component level and finally the system level. Experience with new systems shows that the defect rate of newly developed software varies between 3 and 6 defects per 1000 statements [7]. These defects must be found and removed before the software goes into production. A significant share of them results from false assumptions by the developers about the nature of the task and the behavior of the environment. Such defects can only be uncovered by testing in the target environment, with data produced by others who have a different perspective on the requirements. This is the primary reason for independent testers.

Regardless of where the web services come from, they should go through an independent test process, not only individually but also in combination with one another. This process should be well defined and supported by automated tools so that it is fast, thorough and transparent. Transparency is particularly important when testing web services, so that test cases are traceable and intermediate results can be examined. Because of the volume of test data required, it is also necessary to generate the inputs automatically and to validate the outputs automatically. Generating different combinations of representative test data achieves high functional coverage. Comparing the test results with the expected results ensures a high degree of correctness [8].

Existing Tools for Testing Web Services

There is no shortage of tools for testing web services. In fact, the market is full of them. The problem lies not so much in the quantity of the tools as in their quality. Most of them are recent developments that have not yet matured. They are also difficult to adapt to local conditions and require users to submit data through the user interface of the web client. Testing through the user interface is not the most effective means of testing web services, as R. Martin points out in a recent contribution to IEEE Software magazine. He proposes using a test bus to bypass the user interface and test the services directly [9]. This is the approach the authors have followed.

A typical tool on the market is the Mercury tool "QuickTest Professional". It allows users to fill out a web page and submit it. It then follows the request from the client workstation through the network. This is done by instrumenting the SOAP message. The message is traced to the web service that processes it. If that web service calls another web service, the link to that service is followed. The content of every WSDL interface is recorded and stored in a trace file. In this way the tester can follow the path of the web service request through the architecture and examine the message contents at different stages of processing [10].

Parasoft offers a similar solution, except that the requests are not started from a web client but are generated from the business process procedures written in BPEL. This produces a larger volume of data on the one hand and simulates real conditions on the other. Most requests for web services are expected to come from the business process scripts that drive the business processes. The BPEL language was developed for this purpose, so it is natural to test with it. What is missing in the Parasoft solution is the ability to verify the responses. They have to be inspected visually [11].

One of the pioneers in web testing is the company Empirix. Its eTester tool allows testers to simulate the business processes through the web clients. Their requests are recorded and translated into test scripts. The testers can then alter and vary the scripts to mutate one request into several variants and thereby carry out a comprehensive functional test. The scripts are written in Visual Basic for Applications, so anyone familiar with VB can work with them easily. The scripts also make it possible to verify the response results against the expected results. Unexpected results are sorted out and fed to the error reporting system [12].

Other testing companies such as Software Research Associates, Logica and Compuware are working on similar approaches, so it is only a matter of time until the market is flooded with web service testing tools. After that it will still take some time for the tools to reach the desired level of quality. Until then there remains some potential for customized solutions such as the one described in this paper. The various approaches to test automation are covered by Graham and Fewster [13].

Der WSDLTest-Ansatz

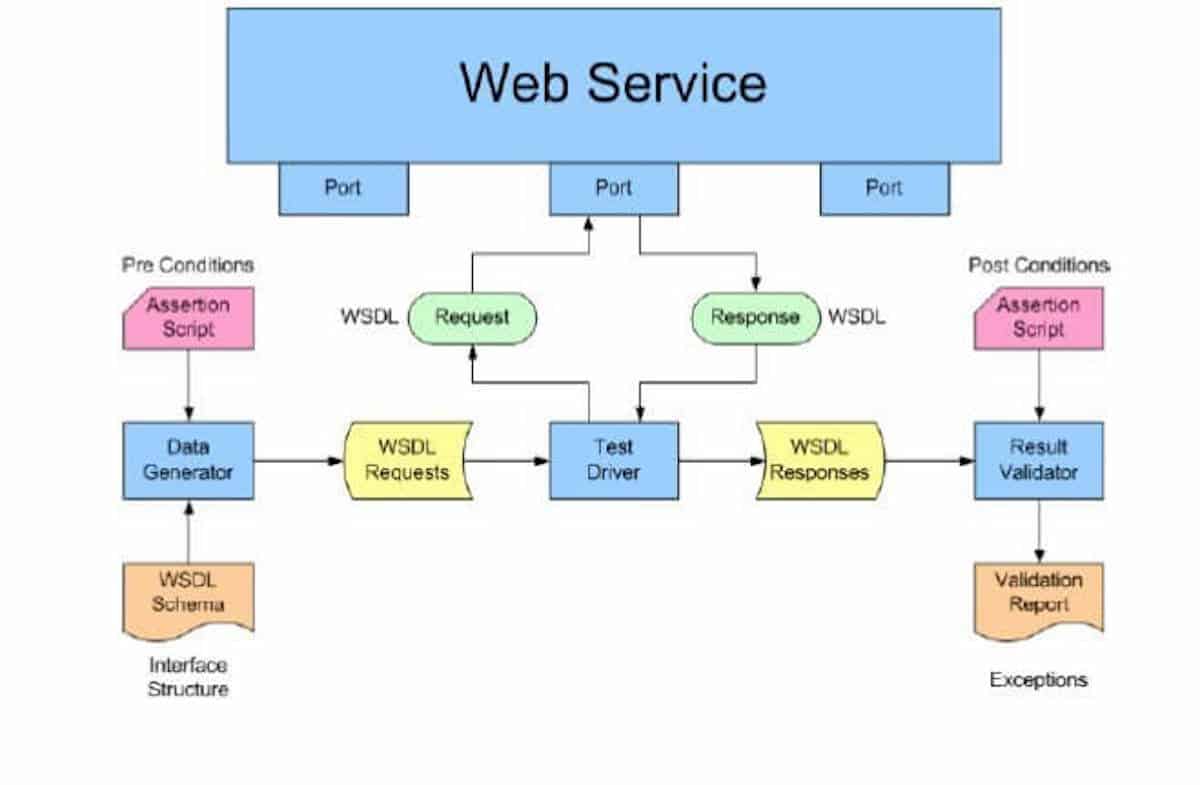

Das WSDLTest-Tool verfolgt einen etwas anderen Ansatz als die anderen kommerziellen Tools zum Testen von Webdiensten. Es basiert auf dem Schema der WSDL-Beschreibung, d. h. es beginnt mit einer statischen Analyse des Schemas. Aus diesem Schema werden zwei Objekte generiert. Das eine ist eine Vorlage für eine Dienstanforderung. Das andere ist ein Testskript. Das Testskript ermöglicht es dem Benutzer, die Argumente in der Web-Service-Anforderungsvorlage zu manipulieren. Außerdem ermöglicht es dem Benutzer, die Ergebnisse in der Webserver-Antwort zu überprüfen. Der Testtreiber ist ein separates Tool, das die Webdienstanfragen liest und versendet und die Webdienstantworten empfängt und speichert.

The Motivation for Developing This Tool

It is often the circumstances of a project that motivate the development of a tool. In this case the project was to test an eGovernment website. The general user, the citizen, was to access the website through the standard web user interface. The local authorities, however, had IT systems that also needed to access the website in order to obtain information from the central government database. It was decided to offer them a web service interface for this purpose. In total nine different services were defined, each with its own request and response formats.

The user interface to the eGovernment website was tested manually by human testers who simulated the behavior of potential users. For the web services, a tool was needed that would simulate the behavior of the user programs by automatically generating typical requests and submitting them to the web service. Since the responses of the web service are not readily visible, it was also necessary to validate the responses automatically. The motivation for developing the tool can therefore be summarized as follows:

- Web services cannot be trusted, so they must be tested intensively

- All requests should be tested with all representative combinations of arguments

- All responses should be validated with all representative result states

- To test web services it is necessary to generate WSDL requests with specific arguments that exercise the target functions

- To verify the correctness of web services it is necessary to validate the WSDL responses against the expected results

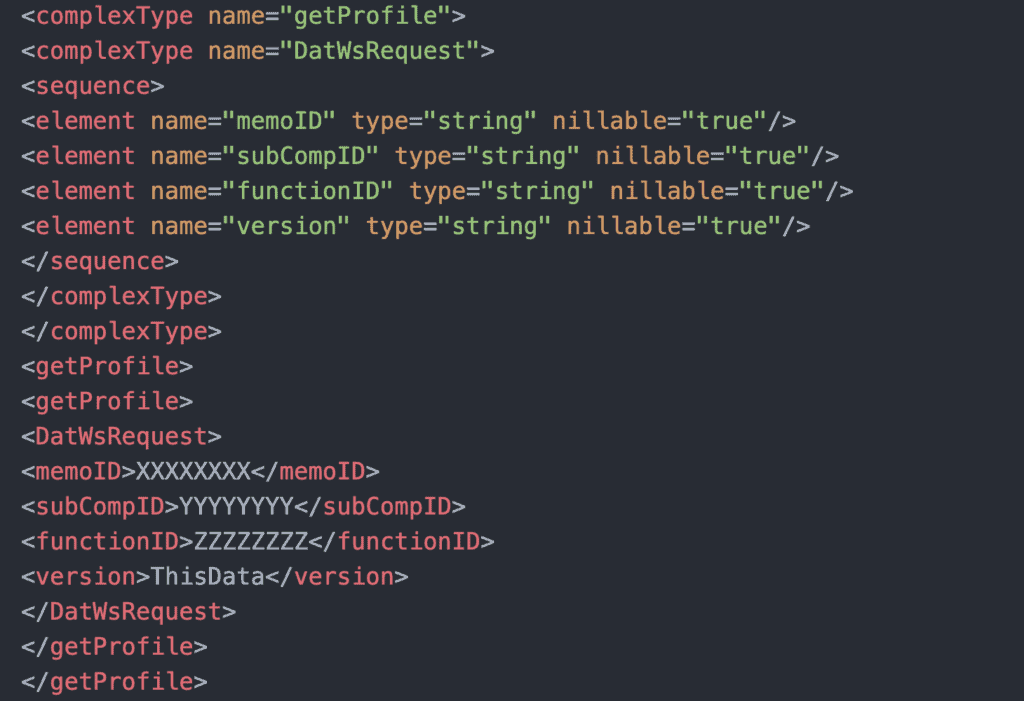

Generating a Template Request from the WSDL Schema

Every test is a test against something. There must be a source for the test data and there must be an oracle against which the test results can be compared [14]. In the case of WSDLTest the oracle is the WSDL schema. This schema is either generated automatically from the interface design or written manually by the developer. As a third, more advanced alternative, it can be created from the BPEL process description. Regardless of how it is created, the schema defines the basic complex data types in accordance with the rules of the XML Schema standard. Complex data types can contain other complex data types, so that the data tree is represented with single and multiple occurrences of its nodes. The schema then defines the base nodes, i.e. the top nodes of the tree, and their order. These are the actual parameters. The following example is an excerpt from the type definitions of an eGovernment web service schema.

The parameter description is followed by the message descriptions, which identify the name and the parts of each message, whether it is a request or a response. These are followed by the port type definitions. Each service operation that can be invoked is listed with the names of its input and output messages. These message names are references to the previously defined messages, which in turn are references to the previously defined parameters. The port types are followed by the bindings, which describe the SOAP prototypes composed of service operations. A WSDL schema is a tree structure in which the SOAP prototypes refer to the service operations, which refer to the logical messages, which refer to the parameters, which refer to the various data types. The data types can in turn reference one another. Parsing this tree is called tree walking [15]. The parser selects a top node and follows it down through all of its branches, collecting all subordinate nodes along the way. At the end of each branch it finds the elementary data types such as integers, booleans and strings. WSDLTest goes one step further by assigning a set of representative data values to each elementary data type. For example, integer values are assigned a range from 0 to 10000 and string values are assigned various character combinations. These representative data sets are stored in tables and can be edited by the user before the test data is generated. The complete structure of the WSDL interface therefore looks like this:

Web service interface → SOAP prototypes → service operations → logical messages → parameters → data types → elementary data types → representative values

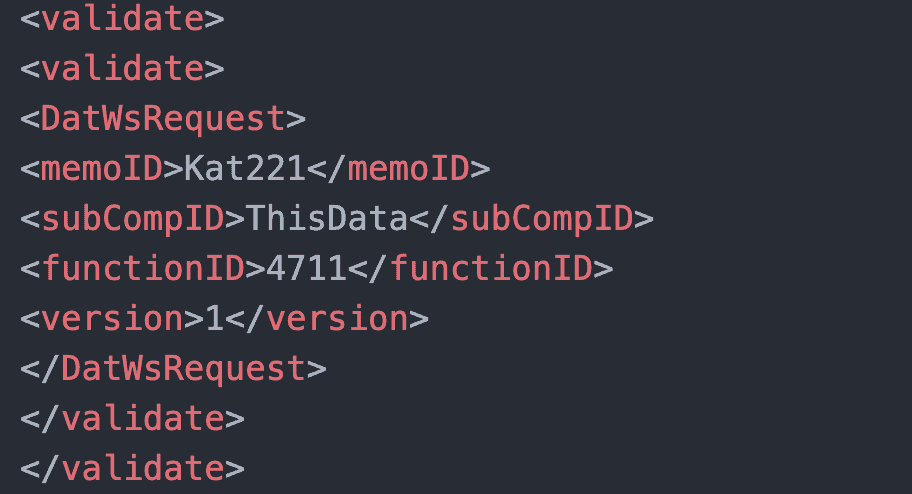

The task of the data generator is to walk the WSDL schema tree down to the level of the elementary data types and to select representative values for each type. The values are chosen at random from the set of possible values. From these values an XML data group is created as follows:


In this way a WSDL service request file with sample data is generated and stored for future use. At the same time a test script is created that allows the tester to override the originally generated values. This script is a template containing the data element names and their values.
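The tree walk and random value selection described above can be sketched in a few lines of Python. This is only an illustration under stated assumptions, not the WSDLTest implementation: the schema fragment, the element names and the contents of the `REPRESENTATIVE` table are invented, and only `xs:integer`, `xs:string` and `xs:boolean` leaves are handled.

```python
import random
import xml.etree.ElementTree as ET

XS = "{http://www.w3.org/2001/XMLSchema}"

# Hypothetical schema fragment; type and element names are invented for illustration.
SCHEMA = """
<xs:schema xmlns:xs="http://www.w3.org/2001/XMLSchema">
  <xs:complexType name="Account">
    <xs:sequence>
      <xs:element name="Account_Number" type="xs:integer"/>
      <xs:element name="Account_Owner" type="xs:string"/>
      <xs:element name="Active" type="xs:boolean"/>
    </xs:sequence>
  </xs:complexType>
  <xs:complexType name="Customer">
    <xs:sequence>
      <xs:element name="Customer_Name" type="xs:string"/>
      <xs:element name="Account" type="Account" maxOccurs="2"/>
    </xs:sequence>
  </xs:complexType>
</xs:schema>
"""

# Editable table of representative values per elementary data type (assumed content).
REPRESENTATIVE = {
    "xs:integer": ["0", "1", "5000", "10000"],
    "xs:string": ["", "A", "Smith", "x" * 40],
    "xs:boolean": ["true", "false"],
}

def generate(type_name, tag, types, rng):
    """Walk a complex type down to its leaves and build an XML element from it."""
    node = ET.Element(tag)
    for child in types[type_name].iter(XS + "element"):
        name, ctype = child.get("name"), child.get("type")
        occurs = int(child.get("maxOccurs", "1"))
        for _ in range(occurs):
            if ctype in REPRESENTATIVE:      # leaf: elementary data type
                leaf = ET.SubElement(node, name)
                leaf.text = rng.choice(REPRESENTATIVE[ctype])
            else:                            # branch: nested complex type
                node.append(generate(ctype, name, types, rng))
    return node

root = ET.fromstring(SCHEMA)
types = {t.get("name"): t for t in root.iter(XS + "complexType")}
request = generate("Customer", "Customer", types, random.Random(1))
print(ET.tostring(request, encoding="unicode"))
```

Seeding the random generator keeps a generated request reproducible between runs, which makes later comparison of responses easier.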

By changing the script, the tester can now change the values of the service request. Since a script is also generated for the output data, the tester also receives a template for verifying the service responses.

What we see here is the result of the schema analysis, namely a WSDL template that serves as input to the final WSDL test data generation. A WSDL request is a cascading data structure that begins with type definitions, which are referenced by the message definitions, which are in turn referenced by the input/output operations assigned to a port. Since most of the code, such as the code defining the ports and the SOAP containers, is purely technical and also highly repetitive, it is practical to have a WSDL template that can be copied and adapted to the particular web service request.

Writing Pre-Condition Assertions

The test scripts for WSDLTest are sequences of pre-condition assertions that define possible states of the web service request. Note that the same assertion script language is also used for testing other kinds of data such as relational databases, XML files and text files. A state is a combination of given values for the data types specified in the WSDL interface definition. The values are assigned to the individual data elements, but their assignment can be mutually dependent, so that the tester can determine a particular combination of values, for example:

assert new.Account_Status = "y" if(old.Account_Balance < "0");

There are six different assertion types for assigning test data values:

- the assignment of another existing data value from the same interface

- the assignment of a constant value

- the assignment of a set of alternative values

- the assignment of a value range

- the assignment of a concatenated value

- the assignment of a computed value

The assignment of another existing data value is made by referring to that value. The value referred to must lie within the same WSDL.

assert new.Account_Owner = old.Customer_Name;

The assignment of a constant value is made by giving the value as a literal in the statement. All literals are enclosed in quotation marks.

assert new.Account_Balance = "0";

The assignment of a set of alternative values is made by enumeration. The enumerated values are separated by an or sign, "!".

assert new.Account_Status = "0" ! "1" ! "2" ! "3";

According to this assertion, the values are assigned in turn, beginning with 0. The first account occurrence has status 0, the second status 1, the third status 2, and so on.

The assignment of a value range serves boundary value analysis. It is made by giving the lower and upper bounds of a numeric range.

assert new.Account_Status = ["1" : "5"];

This causes the values 0, 1, 2, 4, 5, 6 to be assigned in turn, i.e. each bound together with the values one below and one above it. The first account occurrence has status 0, the second 1, the third 2, the fourth 4, the fifth 5 and the sixth 6.
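The value sequences produced by the alternate and range assertions can be reproduced with a short Python sketch. The function names are hypothetical. Wrapping around after the last range value is an assumption made here for symmetry with the alternate values; the text only states the first six assignments.

```python
from itertools import cycle, islice

def alternate_values(values):
    """Round-robin over an enumeration such as "0" ! "1" ! "2" ! "3"."""
    return cycle(values)

def range_values(lower, upper):
    """Boundary values for [lower : upper]: each bound minus one, itself, plus one."""
    return cycle([lower - 1, lower, lower + 1, upper - 1, upper, upper + 1])

# First six account occurrences for each assertion type.
print(list(islice(alternate_values(["0", "1", "2", "3"]), 6)))  # ['0', '1', '2', '3', '0', '1']
print(list(islice(range_values(1, 5), 6)))                      # [0, 1, 2, 4, 5, 6]
```

For very narrow ranges such as `["1" : "2"]` this simple scheme yields duplicate values; how the tool treats that case is not stated in the text.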

The assignment of a concatenated value is made by joining two or more existing values with two or more constant values into a single string.

assert new.Account_Owner = "Mr. " | Customer_Name | " from " | Customer_City;

The assignment of a computed value is given as an arithmetic expression in which the arguments can be existing values or constants.

assert new.Account_Balance = old.Account_Balance / "2" + "1";

The assert assignments can be conditional or unconditional. If they are conditional, they are followed by a logical expression that compares a data variable of the WSDL interface with another data variable of the same interface or with a constant value.

assert new.Account_Owner = "Smith" if(old.Account_Number = "100922" & old.Account_Balance > "1000");

The tester adapts the assertion statements in the script generated from the WSDL schema so as to produce a representative test data profile with equivalence classes and boundary value analysis as well as progressive and degressive value sequences. The goal is to manipulate the input data so that a broad spectrum of representative service requests can be tested. To achieve this, testers should know what the web service is supposed to do and assign the data values accordingly.

file: DAT-WS;

if (object = "DatWsRequest");

assert new.memoID = old.memoID;

assert new.subCompID = old.subCompID;

assert new.functionID = "4711";

assert new.version = "1";

endObject;

if (object = "DatWSProfile");

assert new.attributeName = old.attributeName;

assert new.typ = "21" ! "22" ! "23";

assert new.value = "Sneed";

endObject;

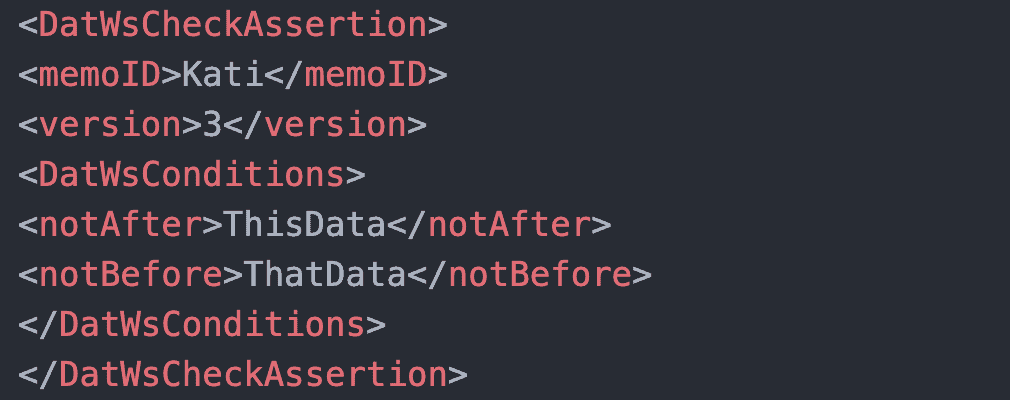

if (object = "DatWsCheckAssertion");

assert new.memoID = "Kati";

assert new.version = "3";

assert new.notAfter = "ThisData";

assert new.notBefore = "ThatData";

endObject;

end;

Overwriting the Template Data

Once the assertion scripts are available, it is possible to overwrite the template of a web service request with the asserted data. This is the task of the XMLGen module. It matches the WSDL file generated by the WSDLGen module against the assertion script written by the tester. The data names in the assertion script are checked against the WSDL schema and the assertions are compiled into symbol tables. There are separate tables for the constants, the variables, the assignments and the conditions.

After the assertion script has been compiled, the corresponding WSDL file is read and the data values are replaced by the values derived from the assertions. If the assertion refers to a constant, the constant replaces the existing value of the XML data element whose name matches the one in the assertion. If the assertion refers to a variable, the value of the XML data element with that variable name is moved into the target data element. Alternative values are assigned one after another in ascending order until the last value is reached, after which assignment starts again with the first value. Range values are assigned as the boundary values plus and minus one.

The end result is a sequence of web service requests with different representative states, combining the originally generated data with the data assigned by the assertion scripts. By changing the assertions, the tester can change the states of the requests and so ensure maximum data coverage.
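The overwrite step can be illustrated with the following Python sketch. It is not the XMLGen code: the `TABLE` dictionary merely stands in for the compiled symbol tables, the request layout is invented, and only constant, variable and alternate assignments are covered.

```python
import xml.etree.ElementTree as ET
from itertools import cycle

def make_template(n):
    """Build n generated Account requests with placeholder values (hypothetical data)."""
    root = ET.Element("Requests")
    for i in range(n):
        account = ET.SubElement(root, "Account")
        for tag, text in [("Customer_Name", f"Customer{i}"),
                          ("Account_Owner", "unknown"),
                          ("Account_Status", "9"),
                          ("Account_Balance", "123"),
                          ("Account_Number", str(1000 + i))]:
            ET.SubElement(account, tag).text = text
    return root

# Stand-in for the compiled symbol tables: target name -> (kind, argument).
TABLE = {
    "Account_Owner": ("variable", "Customer_Name"),                  # = old.Customer_Name
    "Account_Balance": ("constant", "0"),                            # = "0"
    "Account_Status": ("alternates", cycle(["0", "1", "2", "3"])),   # = "0" ! "1" ! "2" ! "3"
}

def overwrite(root, table):
    """Replace generated values by asserted values; unasserted elements stay unchanged."""
    for request in root:
        old = {el.tag: el.text for el in request}   # values before any overwrite
        for el in request:
            if el.tag not in table:
                continue                            # no assertion: keep generated value
            kind, arg = table[el.tag]
            if kind == "constant":
                el.text = arg
            elif kind == "variable":
                el.text = old[arg]
            elif kind == "alternates":
                el.text = next(arg)                 # starts over after the last value
    return root

requests = overwrite(make_template(5), TABLE)
for account in requests:
    print({el.tag: el.text for el in account})
```

Taking a snapshot of the old values before overwriting ensures that a variable assignment always copies the generated value, not a value that another assertion has already replaced.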

Activating the Web Services

Once the web service requests have been generated, they can be sent to the server. This is the task of the WS test driver. It is a simulated BPEL process with a loop construct. The loop is driven by a list of web services arranged in the order in which they are to be invoked. The tester can edit the list to change the order according to the test requirements.

The test driver takes the name of the next web service to be invoked from the list. It then reads the generated WSDL file with the name of that web service and sends a series of requests to the specified service. When testing in synchronous mode, it waits until a response is received before dispatching the next request. When testing in asynchronous mode, it sends several requests until a wait command appears in the web service list. At that point it waits until it has received responses for all dispatched requests before continuing with the next request.
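The dispatch loop with its two modes can be sketched as follows. This is a simplified Python illustration, not the driver itself: `send` is a stand-in that performs no network access, the `WAIT` marker and the service names are invented, and a thread pool replaces whatever mechanism the real driver uses for asynchronous dispatch.

```python
from concurrent.futures import ThreadPoolExecutor

def send(service, request):
    """Stand-in for the real SOAP call; a real driver would post the request over HTTP."""
    return f"<response service='{service}'>{request}</response>"

def run(service_list, requests, synchronous=True):
    """Dispatch all requests in list order and return the stored responses."""
    responses = []
    with ThreadPoolExecutor(max_workers=4) as pool:
        pending = []
        for entry in service_list:
            if entry == "WAIT":                       # wait command in the service list
                responses += [f.result() for f in pending]
                pending = []
                continue
            for request in requests[entry]:
                future = pool.submit(send, entry, request)
                if synchronous:
                    responses.append(future.result()) # block until the response arrives
                else:
                    pending.append(future)            # keep sending, collect later
        responses += [f.result() for f in pending]    # drain anything still outstanding
    return responses

service_list = ["GetCustomer", "GetAccount", "WAIT", "GetBalance"]
requests = {name: [f"<{name} case='{i}'/>" for i in range(2)]
            for name in service_list if name != "WAIT"}

sync_responses = run(service_list, requests, synchronous=True)
async_responses = run(service_list, requests, synchronous=False)
print(len(sync_responses), len(async_responses))
```

The driver only sends and stores; it neither builds requests nor checks responses, which keeps it a plain dispatcher.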

The responses are accepted and stored in separate response files for later verification by a postprocessor. It is not the driver's job to create requests or to check the responses. The requests are created by the preprocessor. The driver only sends them out. The responses are checked by the postprocessor. The driver only stores them. In this way the role of the test driver is reduced to that of a simple dispatcher. BPEL procedures are very well suited to this, since they have all the features needed to invoke web services within a predefined workflow.

Writing Post-Condition Assertions

The same assertion language is used for verifying the web service responses as for constructing the web service requests. Here, however, the assertions have the inverse meaning. Data is not assigned from existing variables and constants, but is compared with the data values of previous responses or with constant values.

The comparison with another existing data value is made by referring to that value.

assert new.Account_Owner = old.Account_Owner;

The comparison with a constant value is made by giving the value as a literal in the statement. As with assignment, the literals are enclosed in quotation marks.

assert new.Account_Balance = "33.50";

To check whether the response data matches at least one value from a list of values, the alternate assertion is used.

assert new.Account_Status = "0" ! "1" ! "2" ! "3" ;

An assertion can also check whether the response data lies within a value range (e.g. between 100 and 500).

assert new.Account_Balance = ["100.00" : "500.00"];

A comparison assertion can also involve computed values. The expression is evaluated first, then the response data is checked against the result.

assert new.Account_Balance = old.Account_Balance - "50";

Like the pre-conditions, the post-conditions can be unconditional or conditional. If they are conditional, they are qualified by a logical if expression. The expression can contain a comparison of two variables in the web service response, or of a variable in the response with a constant value.

assert new.Account_Status = "3" if(old.Account_Balance < "1000");

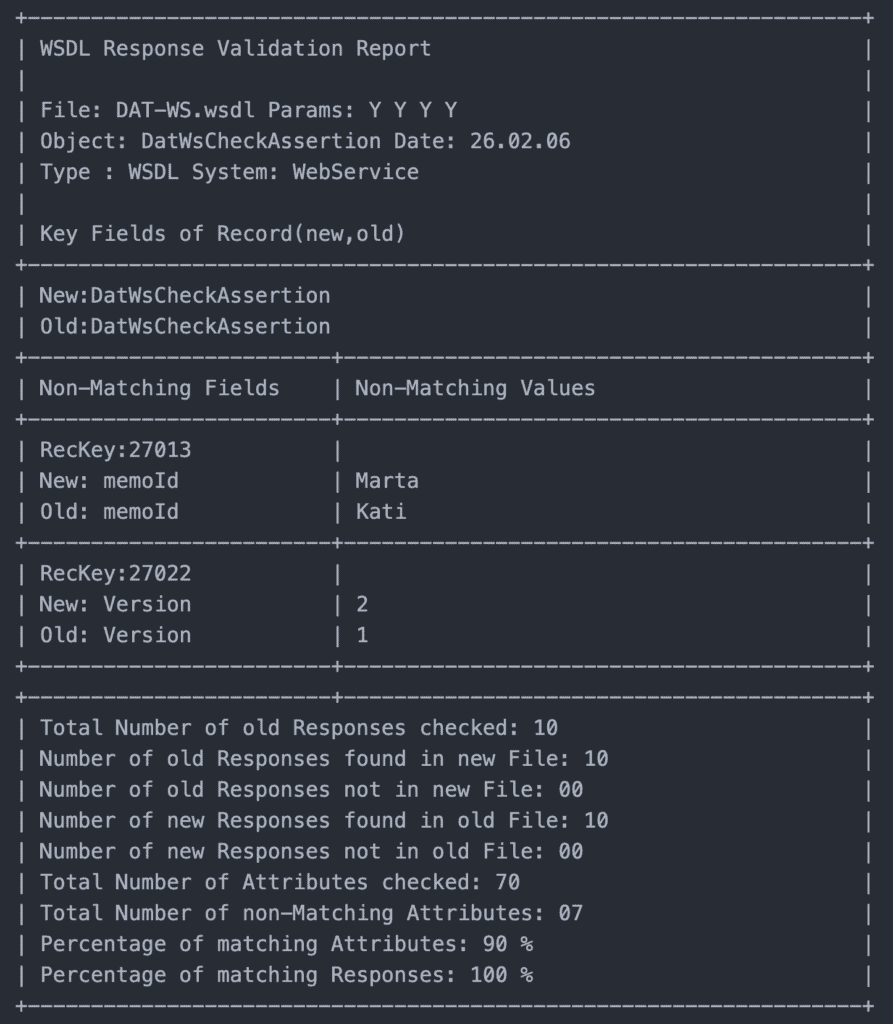

In every case where the assertion does not hold, an error message is recorded showing both the expected and the actual value. Provided there is an assertion check for every attribute of the WSDL response, verification of the response is 100%. It is not necessary, however, to check every attribute. The tester can decide to restrict the check to critical variables. In that case the data coverage is lower. Data coverage is measured as the number of asserted results relative to the total number of results.

Validating the Responses

The XMLVal module performs the task of verifying the results of the web service. To do so it must first translate the post-condition assertions into internal tables of variable references, constants, enumerations and ranges. In the process it checks the data names and types against the names and types declared in the WSDL schema to ensure consistency.

Once the assertion scripts have been compiled successfully, the tool uses the compiled tables to check the response of the web service. First, the expected values are stored in a table with a key for each object occurrence. Second, it parses the WSDL result file and matches the objects found there against the objects in the assertion tables. When a match is found, the attributes of that object are extracted and their values are compared with the expected values. If they do not satisfy the verification condition, the data names and values are written to a list of mismatched results. It is then the tester's job to find out why the results do not match. In addition to listing the assertion violations, the XMLVal tool also produces some statistics on the degree of data coverage and the degree of correctness.
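A reduced form of this validation, including the two statistics, might look like the Python sketch below. The check kinds, the sample response and the function names are illustrative assumptions. Data coverage follows the definition given earlier (asserted results over all results); the correctness ratio (passed checks over performed checks) is an assumed definition, since the text does not spell it out.

```python
def holds(check, actual):
    """Evaluate one compiled post-condition against an actual response value."""
    kind, arg = check
    if kind == "constant":
        return actual == arg
    if kind == "alternates":
        return actual in arg
    if kind == "range":
        return arg[0] <= float(actual) <= arg[1]
    raise ValueError(f"unknown check kind: {kind}")

def validate(response, checks):
    """Return the mismatch list plus data coverage and correctness ratios."""
    mismatches = [(name, checks[name][1], actual)        # (attribute, expected, actual)
                  for name, actual in response.items()
                  if name in checks and not holds(checks[name], actual)]
    checked = sum(1 for name in response if name in checks)
    coverage = checked / len(response)                   # asserted results / all results
    correctness = (checked - len(mismatches)) / checked if checked else 1.0
    return mismatches, coverage, correctness

# Hypothetical response attributes and compiled checks.
response = {"Account_Owner": "Smith", "Account_Balance": "620.00",
            "Account_Status": "2", "Account_Number": "100922"}
checks = {
    "Account_Owner": ("constant", "Smith"),
    "Account_Balance": ("range", (100.0, 500.0)),
    "Account_Status": ("alternates", ["0", "1", "2", "3"]),
}

mismatches, coverage, correctness = validate(response, checks)
print(mismatches)
print(f"coverage={coverage:.2f} correctness={correctness:.2f}")
```

In this example `Account_Number` has no assertion, so three of four attributes are checked, and the out-of-range balance is the single reported mismatch.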

Components of the WSDLTest Tool

The WSDLTest tool was assembled from several components. These are:

- GUI Shell

- XML File Writer

- XML File Reader

- Assertion Compiler

- XSD Tree Walker

- WSDL Analyzer

- Table Processor

- Error Handler

- Random Request Data Generator

- Selective Request Generator

- Request Validator

- Validation Report Generator

- WS Test Driver

Some of these components were taken over from an existing tool, others were newly developed. The XML processing concept has been published [16] and can be reused. The assertion language and the assertion compiler were taken over from an earlier tool and extended. This made it possible to have a prototype version ready for use in less than a month. The tool was later refined and extended. In its original form the tool was built as follows:

- The shell is implemented in Borland Delphi

- The core is implemented in Borland C++

- The tool uses no database, only temporary work files

- The tool was developed to run in an MS Windows environment or an equivalent environment

- The connection between shell and core is made through an XML parameter file

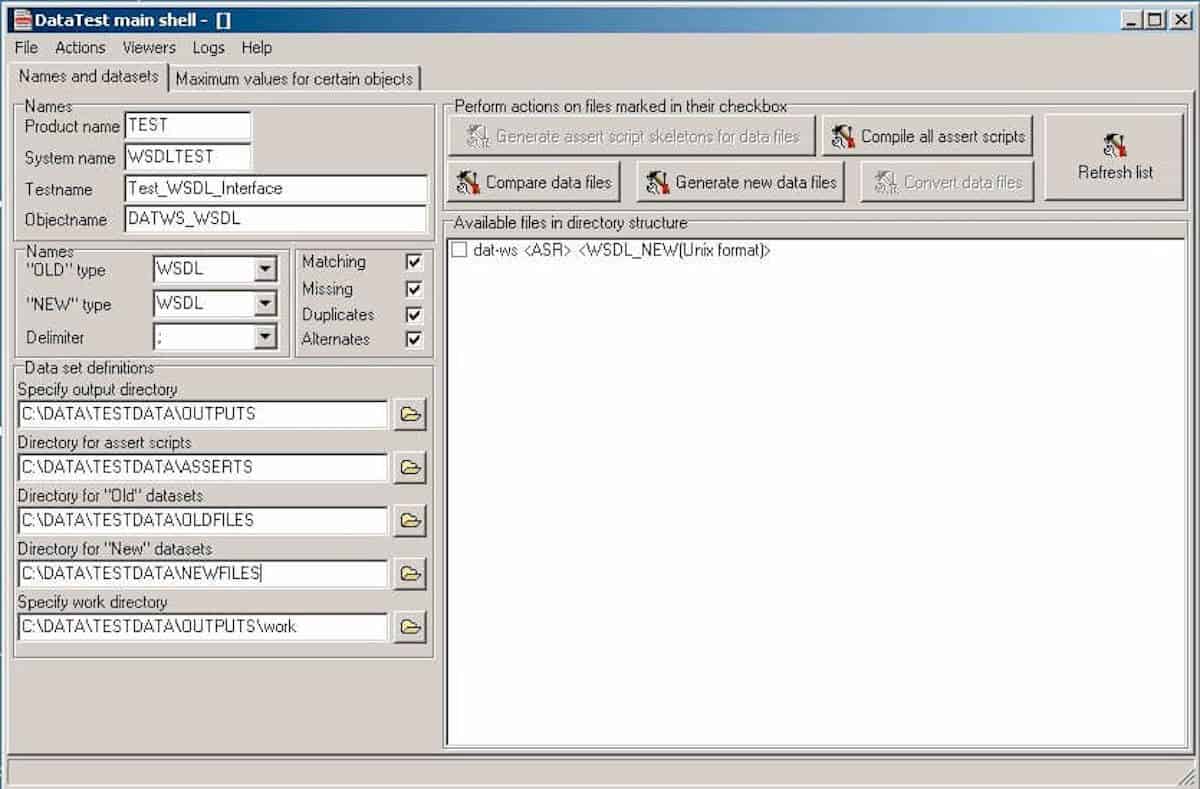

The User Interface

The Windows interface of WSDLTest is designed to accept parameters from the user, select files from a directory and invoke the backend processes.

The three directories from which files can be selected are:

- the assertion directory with the assertion text files

- the old file directory with the test inputs: csv, sql, xml and wsdl files

- the new file directory with the test outputs: csv, xml and wsdl files

In addition there is an output directory in which the logs and reports are collected. It is important that the file names are the same in all four directories, since that is how the files are linked to one another. Only the extension may vary. Thus the actual response "Message.wsdl" is compared with the expected response "Message.wsdl" using the assertion script "Message.asr" to produce the report "Message.rep". The assertion scripts have the extension .asr. The names of all files belonging to a particular project are displayed together with their types so that the user can select them.

The Assertion Compiler

The assertion compiler was developed to read the assertion scripts and translate them into internal tables that can be interpreted at test time. A total of nine tables are generated for each file or object. These are:

- A header table with information about the test object

- A key table with an entry for up to 10 keys, used to relate the old and new files

- An assertion table with an entry for up to 80 assertion statements

- A condition table with an entry for each pre-condition to be fulfilled

- A constant table with an entry for each constant value to be compared or generated

- An alternates table with an entry for each alternative value an attribute can have

- A concatenation table with an entry for each concatenated value

- A computation table with the operands and operators of the arithmetic expressions

- A replacement table with up to 20 fields whose values can be replaced by other values

Assertion compilation must take place before a file can be generated or validated. It is of course possible to compile many assertion scripts at once before starting to generate and validate files. The results of the assertion compilation are written to a log file that is displayed to the user at the end of each compile run.
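How assert statements might be translated into such tables is shown in the following heavily simplified Python sketch. It is an assumption-laden illustration, not the actual compiler: only four of the nine tables are modeled, table layouts and names are invented, variable references and expressions are stored uninterpreted, and literals containing ";" or "!" are not handled.

```python
import re

# One statement with whitespace normalized and the trailing ";" removed.
ASSERT = re.compile(r'^assert new\.(\w+) ?= ?(.+?)(?: ?if ?\((.+)\))?$')

def compile_script(script):
    """Translate assert statements into simple assertion/condition/value tables."""
    tables = {"assertions": [], "conditions": [], "constants": [], "alternates": []}
    for statement in script.split(";"):
        match = ASSERT.match(" ".join(statement.split()))
        if not match:
            continue                                    # not an assert statement
        target, value, condition = match.groups()
        if "!" in value:                                # enumeration of alternatives
            kind = "alternates"
            tables[kind].append([v.strip().strip('"') for v in value.split("!")])
            ref = len(tables[kind]) - 1
        elif re.fullmatch(r'"[^"]*"', value):           # single quoted literal
            kind = "constants"
            tables[kind].append(value.strip('"'))
            ref = len(tables[kind]) - 1
        else:                                           # variable reference or expression
            kind, ref = "expression", value
        cond = None
        if condition:
            tables["conditions"].append(condition)
            cond = len(tables["conditions"]) - 1
        tables["assertions"].append((target, kind, ref, cond))
    return tables

SCRIPT = '''
assert new.Account_Balance = "0";
assert new.Account_Status = "0" ! "1" ! "2" ! "3";
assert new.Account_Owner = old.Customer_Name;
assert new.Account_Status = "y" if(old.Account_Balance < "0");
'''

tables = compile_script(SCRIPT)
for name, rows in tables.items():
    print(name, rows)
```

Each assertion entry refers to the value tables by index, so the generator and the validator can interpret the same compiled tables at test time without re-parsing the script.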

The WSDL Request Generator

The request generator consists of three components:

- Schema preprocessor

- Random request generator

- Data assigner

XML schemas are normally generated by a tool. As a result, tags with many attributes are written together on a single line. To make the XML text more readable and easier to parse, the schema preprocessor splits such long lines into many short, indented lines. This simplifies the processing of the schema by the subsequent components.
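The line-splitting step could look roughly like the following Python sketch. It is an illustration under simplifying assumptions, not the preprocessor used in WSDLTest: the function name `split_tag` is hypothetical, only double-quoted attributes are recognized, and a line must contain exactly one start tag.

```python
import re

# One attribute of the form name="value" (double quotes only, by assumption).
ATTR = re.compile(r'\s+([\w:.-]+\s*=\s*"[^"]*")')
# A complete one-line start tag: indentation, tag name, attributes, closer.
TAG = re.compile(r'(\s*)(<[\w:.-]+)((?:\s+[\w:.-]+\s*=\s*"[^"]*")+)\s*(/?>)\s*$')


def split_tag(line, indent="    "):
    """Put each attribute of a one-line start tag on its own indented line."""
    match = TAG.match(line)
    if not match:
        return [line]  # nothing to split, keep the line unchanged
    lead, opener, attrs, closer = match.groups()
    attr_lines = [lead + indent + attr for attr in ATTR.findall(attrs)]
    attr_lines[-1] += closer  # the closing bracket stays with the last attribute
    return [lead + opener] + attr_lines


for out in split_tag('<xsd:element name="Amount" type="xsd:decimal" minOccurs="0"/>'):
    print(out)
```

Because XML allows line breaks between attributes, the split text remains equivalent to the original tag.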

The random request generator then creates a series of WSDL requests with constant values as data. Which constants are assigned depends on the element type. String data is assigned from a set of representative strings, and numeric data is derived by adding or subtracting constant intervals. Date and time values are taken from the clock. Within a request, data can be given either as a variable between the tags of an element or as an attribute of an element. Attributes pose a problem because they have no types; they are strings by definition. Therefore only string values are assigned to them. The end result is a request in XML format with randomly assigned data elements and attributes.
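The type-dependent assignment can be sketched in Python as follows. This is an illustration only: the sample strings, the start value and step, the type names and the helper names `ValueSource` and `build_request` are all assumptions, and the sketch only adds intervals, whereas the text also mentions subtraction.

```python
from datetime import datetime
from itertools import cycle
import xml.etree.ElementTree as ET

SAMPLE_STRINGS = ["Alpha", "Beta", "Gamma"]  # hypothetical representative strings
NUMERIC_TYPES = {"int", "integer", "long", "short", "decimal", "float", "double"}


class ValueSource:
    """Hands out a value per element type, as described in the paragraph above."""

    def __init__(self, start=100, step=10, clock=datetime.now):
        self._strings = cycle(SAMPLE_STRINGS)
        self._number = start
        self._step = step
        self._clock = clock

    def next_value(self, xsd_type):
        if xsd_type in NUMERIC_TYPES:
            self._number += self._step  # constant interval
            return str(self._number)
        if xsd_type == "date":
            return self._clock().date().isoformat()
        if xsd_type == "time":
            return self._clock().time().isoformat()
        if xsd_type == "dateTime":
            return self._clock().isoformat()
        return next(self._strings)  # strings and unknown types


def build_request(name, elements, attributes, source):
    """Build one request; attributes are untyped, so they always get strings."""
    root = ET.Element(name)
    for attr in attributes:
        root.set(attr, source.next_value("string"))
    for element_name, element_type in elements:
        ET.SubElement(root, element_name).text = source.next_value(element_type)
    return ET.tostring(root, encoding="unicode")
```

Passing the clock as a parameter keeps the sketch testable; by default the values come from the system clock, as in the text.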

The data assigner reads the randomly generated WSDL requests and writes the same requests out again with adjusted data variables. In the assertion scripts, the user assigns specific arguments to the request elements by name. When the assertion scripts are compiled, the names and arguments are stored in symbol tables. For each data element of the request, the data assigner consults the symbol tables and checks whether an asserted value or a set of values has been assigned to that element. If so, the random value of the element is overwritten by the asserted value. If not, the random value is retained. In this way the WSDL requests become a mixture of random values, representative values and boundary values. Through the assertions the tester controls which arguments are sent to the web service. The WSDL requests are stored in a temporary file, where they are available to the request dispatcher. To distinguish the requests, each one is assigned a test case identifier as a unique key.
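A minimal Python sketch of this override step follows. The symbol table is modeled as a plain dictionary from element name to an asserted value or a list of values; picking from a list by test case number and storing the identifier in a `testcase` attribute are assumptions made for illustration, not the documented behavior of WSDLTest. Attributes are left out for brevity.

```python
import xml.etree.ElementTree as ET


def assign_data(request_xml, symbols, case_no=0):
    """Overwrite generated element values with asserted ones, where present."""
    root = ET.fromstring(request_xml)
    for element in root.iter():
        asserted = symbols.get(element.tag)
        if asserted is None:
            continue  # no assertion for this element: keep the generated value
        if isinstance(asserted, (list, tuple)):
            # A value set: pick one member per test case (assumed policy).
            asserted = asserted[case_no % len(asserted)]
        element.text = str(asserted)
    # Hypothetical way of attaching the test case identifier to the request.
    root.set("testcase", f"TC{case_no:03d}")
    return ET.tostring(root, encoding="unicode")


generated = "<GetOrder><Customer>Beta</Customer><Amount>110</Amount></GetOrder>"
print(assign_data(generated, {"Amount": [0, 999999]}, case_no=1))
```

Here the tester has asserted two boundary values for `Amount`, while `Customer` keeps its generated value.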

The WSDL Response Validator

The response validator consists of only two components:

- Response preprocessor

- Data checker

The response preprocessor works in a similar way to the schema preprocessor. It untangles the responses returned by the web service so that the data checker can process them more easily. The responses are taken from the queue file of the test dispatcher.

The data checker reads the responses and identifies the results by either their tag or their attribute name. Each tag or attribute name is checked against the data names in the output assertion table. If a match is found, the value of that element or attribute is compared with the asserted value. If the actual value deviates from the asserted value, a discrepancy is reported in the response validation report. This is repeated for every response.

To distinguish between responses, the test case identifier assigned to the request is passed on to the response, so that every response is linked to a particular request. This allows the tester to include the test case identifier in the assertions and to compare responses on a per-test-case basis.
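The comparison loop can be sketched in Python as below. It is a simplified illustration: the output assertion table is reduced to a dictionary of expected values, only equality is checked, and the function name `check_response` and the message format are assumptions.

```python
import xml.etree.ElementTree as ET


def check_response(response_xml, asserted):
    """Compare element and attribute values with asserted values by name."""
    root = ET.fromstring(response_xml)
    discrepancies = []
    for element in root.iter():
        # Results are identified either by tag name or by attribute name.
        candidates = [(element.tag, (element.text or "").strip())]
        candidates += list(element.attrib.items())
        for name, actual in candidates:
            if name in asserted and actual != str(asserted[name]):
                discrepancies.append(
                    f"{name}: expected {asserted[name]!r}, got {actual!r}"
                )
    return discrepancies


response = '<OrderResult testcase="TC001"><Status>OK</Status><Total>120</Total></OrderResult>'
for line in check_response(response, {"Status": "OK", "Total": "110", "testcase": "TC001"}):
    print(line)
```

Including the test case identifier among the asserted names, as in the example, ties the expected values to one particular request.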

The WSDL Request Dispatcher

The WSDL request dispatcher is the only Java component. It takes the generated requests from the input queue file, packs them into a SOAP envelope and sends them to the desired web service. If there is a problem reaching the web service, it handles the exception, records it and continues with the next request. The responses are taken out of the SOAP envelope and stored in the output queue file, where they are uniquely identified by the test case identifier.

The request dispatcher also records the time at which each test case is dispatched and the time at which the response is returned. These are the start and end times of that test case. By instrumenting the methods of the web services with probes that record the time of their execution, it becomes possible to associate particular methods with particular test cases. This makes it easier to identify errors in the web services. It also makes it possible to identify the methods traversed along the path of a service request through one or more web services. This is a topic for future research [17].
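The dispatch loop with its timing log can be outlined as follows. The original component is written in Java; this Python sketch only mirrors the described behavior. The transport is passed in as a `send` callable so that no real web service is needed, the queue files are replaced by dictionaries, and unwrapping the response envelope is left to `send`. All names are assumptions; the namespace is the standard SOAP 1.1 envelope namespace.

```python
import time

SOAP_ENVELOPE = (
    '<soapenv:Envelope xmlns:soapenv="http://schemas.xmlsoap.org/soap/envelope/">'
    "<soapenv:Body>{body}</soapenv:Body></soapenv:Envelope>"
)


def dispatch(requests, send, clock=time.time):
    """Send each request in a SOAP envelope and log start and end times.

    requests: dict mapping test case identifier to request XML.
    send: callable taking the envelope text and returning the response payload.
    """
    responses, log = {}, []
    for case_id, body in requests.items():
        started = clock()
        try:
            responses[case_id] = send(SOAP_ENVELOPE.format(body=body))
            outcome = "ok"
        except Exception as exc:  # record the failure and carry on
            outcome = f"error: {exc}"
        log.append((case_id, started, clock(), outcome))
    return responses, log
```

The start and end times in the log are what would later be matched against the timestamps written by probes inside the service methods.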

Experience with the WSDLTest Tool

Experience with WSDLTest in an eGovernment project has been encouraging. Nine different web services were tested there, with an average of 22 requests per service. In all, 47 different responses were verified. Of these, 19 contained at least one erroneous result. Thus, of the more than 450 errors found in the project as a whole, about 23 were uncovered in the web service test [18]. It is hard to say how many errors could have been found by testing in some other way. To judge the efficiency of one approach, one would have to compare it with another. What can be said is that the test was relatively cheap and cost the testers no more than two weeks of effort.

It appears that the tool could become a suitable instrument for testing web services, as long as the WSDL interfaces are not too complex. If they are, writing the assertions becomes too difficult and errors creep in. At that point the tester cannot be sure whether an observed error is caused by the web service or by an incorrectly formulated assertion. A similar experience was reported some 30 years ago from the U.S. ballistic missile defense project, where about 40% of the reported errors were actually errors in the test procedures [19]. Looking at the test technology used in that project by the RXVP test laboratory, one finds that the basic test methods (setting preconditions, checking postconditions, instrumenting the software, monitoring the test paths and measuring test coverage) have hardly changed since the 1970s. Only the environment has changed.

Requirements for Future Work

Testing software systems is a complex task that is not yet fully understood. Many issues are involved, such as:

- Specifying test cases

- Generating test data

- Monitoring test execution

- Measuring test coverage

- Validating test results

- Tracking system errors, etc.

The WSDLTest tool addresses only two of these many issues: the generation of test data and the validation of test results. Another tool, TextAnalyzer, analyzes the requirements documents to extract the functional and non-functional test cases. These abstract test cases are then stored in a test case database. It would be necessary to use these test cases in some way to generate the pre- and postcondition assertions. That would mean closing the gap between the requirements specification and the test specification. The greatest obstacle is the informality of requirements specifications. The task is to derive formal, detailed expressions from an abstract, informal description. It is the same problem that the model-driven development community faces.

Another direction for future work is test monitoring. It would be useful to trace the path of a web service request through the system. The tool for this is TestDocu, also by the authors. It instruments the server components to record which request they are currently working on. By processing the resulting trace file, the execution sequence of the web services can be monitored, since many services are often involved in handling a single request. The largest remaining task for the future is to integrate these different test tools so that they can be used as a whole rather than individually. That means building a generic test framework with a common ontology and standardized interfaces between the individual tools.

There remains the fundamental question of how far testing should be automated. Perhaps it is not wise to automate the entire web testing process, and better to rely on the skills and creativity of the human tester. Automation often tends to hide important problems. The question of test automation versus creative testing remains an important topic in the testing literature [20].

Conclusion

This paper has reported on a tool supporting web service testing. The WSDLTest tool generates web service requests from the WSDL schemas and adapts them according to the precondition assertions written by the tester. It dispatches the requests and captures the responses. After testing, it verifies the response contents against the postcondition assertions written by the tester.

The tool is still under development, but it has already been used in an eGovernment project to speed up the testing of web services. Future work will aim at linking this tool with other test tools that support other test activities.

References

- [1] Krafzig, D. / Banke, K. / Slama, D.: Enterprise SOA, Coad Series, Prentice-Hall, Upper Saddle River, NJ, 2004, p. 6

- [2] Juric, M.: Business Process Execution Language for Web Services, Packt Publishing, Birmingham, U.K., 2004, p. 7

- [3] Sneed, H.: “Integrating legacy Software into a Service oriented Architecture”, Proc. of 10th European CSMR, IEEE Computer Society Press, Bari, March 2006, p. 5

- [4] Tilley, S. / Gerdes, J. / Hamilton, T. / Huang, S. / Müller, H. / Smith, D. / Wong, K.: “On the business value and technical challenges of adapting Web services”, Journal of Software Maintenance and Evolution, Vol. 16, No. 1, 2004, p. 31

- [5] Perry, D. / Kaiser, G.: “Adequate Testing and object-oriented Programming”, Vol. 5, No. 2, Jan. 1990, pp. 13-19

- [6] Nguyen, H.Q.: Testing Applications on the Web, John Wiley & Sons, New York, 2001, p. 11

- [7] Musa, J. / Ackerman, A.: “Quantifying Software Validation”, IEEE Software, May 1989, p. 19

- [8] Berg, H. / Boebert, W. / Franta, W. / Moher, T.: Formal Methods of Program Verification and Specification, Prentice-Hall, Englewood Cliffs, 1982, p. 3

- [9] Martin, R.: “The Test Bus Imperative”, IEEE Software, July 2005, p. 65

- [10] Editors: “Mercury Interactive simplifies functional testing”, Computer Weekly, No. 15, April 2006, p. 22

- [11] Editors: “Parasoft supports Web Service Testing”, Computer Weekly, No. 15, April 2006, p. 24

- [12] Empirix Inc.: “e-Test Suite for integrated Web Testing”, www.empirix.com

- [13] Fewster, M. / Graham, D.: Software Test Automation, Addison-Wesley, New York, 1999, p. 248

- [14] Howden, W.: “Functional Program Testing”, McGraw-Hill, New York, 1987, p. 123

- [15] Bradley, N.: “The XML Companion”, Addison-Wesley, Harlow, G.B., 2000, p. 116

- [16] Walsch, B.: “Understanding XSLT, DOM and when to use each”, SIGS DataCom, XML-ONE Conference, Munich, July 2001, p. 101

- [17] Sneed, H.: “Reverse Engineering of Test cases for selective Regression testing”, Proc. of CSMR-2004, IEEE Computer Society Press, Tampere, Finland, March 2004, p. 146

- [18] Sneed, H.: “Testing an eGovernment Website”, Proceedings of the 7th IEEE International Symposium on Web Site Evolution (WSE2005), Budapest, Hungary, September 26, 2005, IEEE Computer Society Press, 2005, p. 3

- [19] Ramamoorthy, C. / Ho, S.: “Testing Large Software with Automated Software Evaluation Systems”, IEEE Transactions on Software Engineering, Vol. 1, March 1975, pp. 46-58

- [20] Sneed, H. / Baumgartner, M. / Seidl, R.: Der Systemtest, Hanser Verlag, Munich/Vienna, 2006